
Are You Using Clean Data to Drive Business Decisions?

by Alan Suchan on Thu, Aug 04, 2016 @ 04:04

You’ve installed Google Analytics on your website and have begun gathering data on how people interact with your site. That’s a great first step, but a few things can skew that data, so some additional setup is needed to make sure the data you base decisions on is clean.

Internal Traffic

A lot of people may not realize it initially, but YOU are often skewing the data for your own website. It’s likely that you and other employees of your company are viewing pages on your site daily. The same can also be said for any external vendors or agencies you may be working with.

Solution: Create a filtered profile in Google Analytics (newer versions of Google Analytics call profiles “views”). To find out what you need to exclude, simply go to Google and search “What is my IP?” The answer box at the top should show your IP address. Make a note of it, then go back into Google Analytics, create a new profile and add a filter that excludes traffic from that IP address.

Repeat these steps for any additional office locations, and for any external vendors you want to filter out: just have them send you their IP addresses and repeat the process. Note: Always apply filters to a new profile and leave the existing profile unfiltered so that the raw data is available if it is ever needed. Also, filters are not retroactive; they apply only to data collected after the filter is created.
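
Once you are excluding several offices and vendors, one option is a single custom filter that excludes traffic whose IP address matches a regular expression, rather than one filter per address. The Python sketch below builds such a pattern. The function name is hypothetical, the IP addresses come from the reserved documentation ranges, and you should test the resulting pattern in your own filter settings before saving it.

```python
import re
from typing import Iterable


def build_ip_exclusion_regex(ips: Iterable[str]) -> str:
    """Return an anchored regex that matches any of the given IP addresses exactly.

    Hypothetical helper: the output is meant to be pasted into a regex-based
    exclude filter on the IP address field.
    """
    # re.escape turns "." into "\." so each dot matches only a literal dot
    escaped = [re.escape(ip.strip()) for ip in ips if ip.strip()]
    if not escaped:
        raise ValueError("at least one IP address is required")
    # ^ and $ anchor the match so 203.0.113.10 does not also match 203.0.113.100
    return "^(" + "|".join(escaped) + ")$"


if __name__ == "__main__":
    # Hypothetical office and vendor IPs (RFC 5737 documentation addresses)
    office_ips = ["203.0.113.10", "198.51.100.25", "192.0.2.7"]
    pattern = build_ip_exclusion_regex(office_ips)
    print(pattern)

    # Sanity check: exact matches are excluded, near-misses are kept
    regex = re.compile(pattern)
    for ip in ["203.0.113.10", "203.0.113.100", "8.8.8.8"]:
        print(ip, "excluded" if regex.match(ip) else "kept")
```

The anchors matter: without them, a pattern for one address would also exclude any longer address that happens to contain it.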

Spam Bot Traffic

Small- to medium-sized businesses often have another issue: robots skewing their website data behind the scenes. “Spam bots” are automated scripts that trigger Google Analytics to record a visit without anyone ever actually visiting the site. This can happen dozens of times a day, and multiplied over weeks and months, it can create a large discrepancy in the number of visits reported for your site.

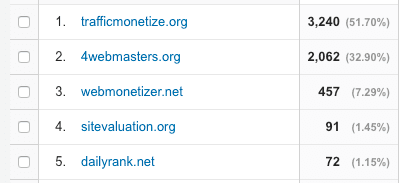

How do you know if you’re being hit by bots? Go to the Referrals report in Analytics (Acquisition > All Traffic > Referrals). The spam should be easy to spot, as in the example below.

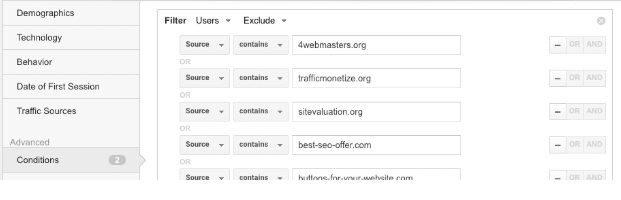

Solution: Create a custom segment in Google Analytics to remove these visits from reports. For this, I usually have the segment builder and the Referrals report open in separate windows on two monitors. In the “Advanced” section of the segment builder, click “Conditions,” then set the options to exclude users whose source contains a domain you identified as spam in the Referrals report. Keep adding spam domains by clicking “OR” on the right side of the screen until all of the spam referring domains are included.
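
Each “OR” row in that condition is a separate “source contains …” check, and traffic is excluded if any one of them matches. The Python sketch below applies the same logic to a Referrals report you have exported, so you can estimate how many sessions your exclusion list would remove before relying on the segment. It assumes a hypothetical export of (source, sessions) rows; the function names, domains and numbers are placeholders, not a real spam list, and it works per report row rather than per user as the segment does.

```python
from typing import Iterable, List, Tuple

# (source, sessions) pairs, as they might appear in an exported Referrals report
Row = Tuple[str, int]


def is_spam(source: str, spam_domains: Iterable[str]) -> bool:
    """Mirror the segment condition: source contains ANY listed domain (the OR rows)."""
    s = source.lower()
    return any(domain.lower() in s for domain in spam_domains)


def split_referrals(rows: Iterable[Row], spam_domains: List[str]) -> Tuple[int, int]:
    """Return (clean_sessions, spam_sessions) totals for the exported rows."""
    clean = spam = 0
    for source, sessions in rows:
        if is_spam(source, spam_domains):
            spam += sessions
        else:
            clean += sessions
    return clean, spam


if __name__ == "__main__":
    # Hypothetical export; every domain and count here is illustrative only
    referrals = [
        ("partner-site.example", 120),
        ("free-traffic-spam.example", 45),
        ("blog.industry-news.example", 60),
        ("get-buttons-spam.example", 30),
    ]
    spam_list = ["free-traffic-spam.example", "get-buttons-spam.example"]
    clean, spam = split_referrals(referrals, spam_list)
    print(f"clean sessions: {clean}, spam sessions: {spam}")
```

Because the match is “contains,” a very short or generic entry in the list could also exclude legitimate referrers, so it is worth checking that the clean total still looks sensible.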

Unlike filters, custom segments can be applied to historical data. As for the root cause, Google has said it is aware of the problem, but as of this writing, not much has been done to exclude these visits on its end.

Current Clients

Existing clients don’t necessarily distort your site metrics, but you may want to track how new customers and prospects interact with your site compared with existing clients. After all, the two groups are likely to browse your site in completely different ways.

Solution: A custom segment is the way to go here as well. One of our clients wanted to focus on the quality of visits from prospects, so they needed to remove current clients, employees and suppliers from their reports.

In this case, it helps if your site’s URL structure includes a directory that only logged-in users can access. For example, logged-in users on example.com could be directed to example.com/logged-in/home. To remove these visitors from your reports, create a custom segment the same way as above and set the conditions to exclude sessions where the page contains /logged-in, as seen in the screenshot below.
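
That condition works on whole sessions: if any page viewed in a session contains /logged-in, the entire session is dropped, including pages viewed before the user logged in. A minimal Python sketch of the rule, assuming a hypothetical mapping of session IDs to the page paths viewed in each session (the function name and data are illustrative only):

```python
from typing import Dict, List


def exclude_logged_in_sessions(
    sessions: Dict[str, List[str]], marker: str = "/logged-in"
) -> Dict[str, List[str]]:
    """Keep only sessions in which no page path contains the marker."""
    return {
        session_id: pages
        for session_id, pages in sessions.items()
        if not any(marker in path for path in pages)
    }


if __name__ == "__main__":
    # Hypothetical sessions keyed by an illustrative session ID
    sessions = {
        "s1": ["/", "/products", "/contact"],                     # prospect
        "s2": ["/", "/logged-in/home", "/logged-in/orders"],      # existing client
        "s3": ["/blog/clean-data", "/login", "/logged-in/home"],  # logged in mid-session
    }
    prospects = exclude_logged_in_sessions(sessions)
    print(sorted(prospects))  # ['s1']
```

Since the check is “contains,” any other path that includes the string (for example a hypothetical /logged-in-help page) would also be excluded, so a distinctive directory name keeps the segment accurate.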

We have successfully implemented this for a number of our clients, and the new view of the data has been extremely valuable. That is the great part of custom segments: they are much more flexible than filters and let you slice and dice historical data in virtually limitless ways.

What else have you seen skewing your data and what have you done to fix the issue?
